Edge Computing and AI: Scaling Business Infrastructure

The centralized cloud computing model that powered the digital economy for the past two decades has reached its structural limits. As we navigate through May 2026, the massive explosion of real-time data generated by internet-of-things (IoT) devices, industrial automation systems, autonomous vehicles, and real-time customer touchpoints has created an unsustainable burden on global data networks. Sending terabytes of raw data back and forth from a localized device to a distant centralized server farm creates immense latency, drives up bandwidth costs, and introduces significant cybersecurity vulnerabilities.

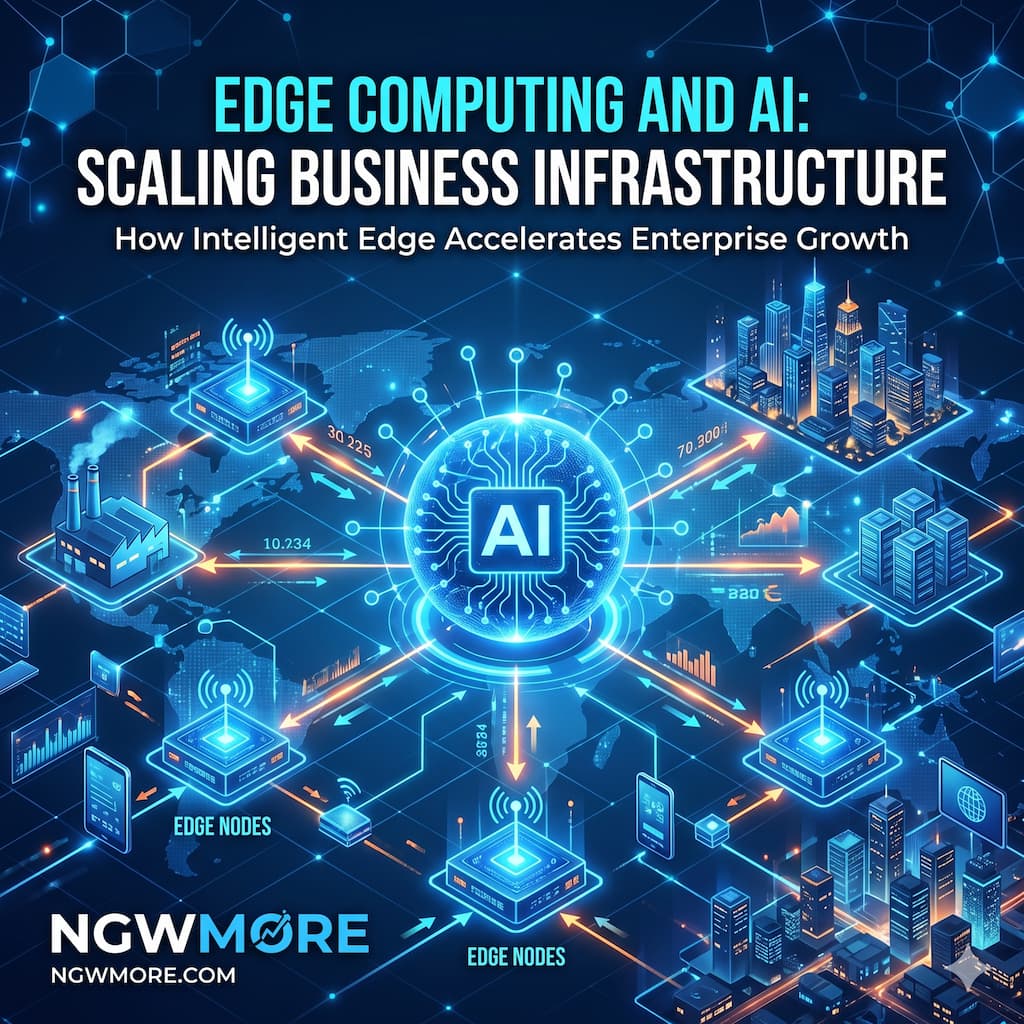

For the digital entrepreneurs, web administrators, and technology strategists at ngwmore.com, scaling a business infrastructure in 2026 requires a fundamental architectural shift. The solution driving the next generation of enterprise efficiency is the convergence of Edge Computing and Artificial Intelligence (Edge AI).

By pushing computational power, machine learning algorithms, and agentic reasoning out of the centralized cloud and directly onto local edge infrastructure—closer to where the data is actually generated—businesses are unlocking unprecedented operational velocity, absolute data sovereignty, and a non-linear path to scaling global operations.

1. The 2026 Paradigm Shift: The Emergence of Edge AI

To successfully scale your digital or physical operations this year, you must understand the technological leap that occurred as we entered 2026. We have moved past the era of “dumb” edge nodes that simply collect and forward raw data. Today, edge nodes run trained models locally and act on the results themselves.

    CENTRALIZED CLOUD MODEL (Past)      EDGE AI ARCHITECTURE (2026)
    ┌──────────────────────────────┐    ┌──────────────────────────────┐
    │ Raw Data Generated at Edge   │    │ Raw Data Generated at Edge   │
    └──────────────┬───────────────┘    └──────────────┬───────────────┘
                   │                                   │
        [High-Latency Transit]           [Sub-Millisecond Processing]
                   │                                   │
                   ▼                                   ▼
    ┌──────────────────────────────┐    ┌──────────────────────────────┐
    │ Central Cloud Processes Data │    │ Edge Node Executes Inference │
    └──────────────┬───────────────┘    └──────────────┬───────────────┘
                   │                                   │
         [Delayed Action Sent]             [Immediate Local Action]
                   │                                   │
                   ▼                                   ▼
    ┌──────────────────────────────┐    ┌──────────────────────────────┐
    │ Physical Device Takes Action │    │ Cloud Receives Only Metadata │
    └──────────────────────────────┘    └──────────────────────────────┘

The Death of Latency

In 2026, many business operations need near-zero-latency responses. Whether it is an autonomous delivery drone navigating a crowded urban environment, a computer vision camera identifying a production defect on a high-speed manufacturing line, or a personalized retail mirror rendering an augmented reality outfit for a consumer, waiting 500 milliseconds for a cloud response means failure. Running inference locally removes the network round trip and can bring response times under 5 milliseconds on suitable hardware, allowing machines to make complex, data-driven decisions in real time.
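To make the gap between 500 ms and 5 ms concrete, the arithmetic can be sketched as follows. This is only an illustration: the conveyor speed is a hypothetical figure, and the function name is invented for the example.

```python
def distance_during_latency(speed_m_per_s: float, latency_ms: float) -> float:
    """Distance in meters an object travels while the system waits for a decision."""
    return speed_m_per_s * (latency_ms / 1000.0)


# Hypothetical conveyor speed; replace with a measured value from your own line.
CONVEYOR_SPEED = 2.0  # meters per second

cloud_gap = distance_during_latency(CONVEYOR_SPEED, 500)  # cloud round trip
edge_gap = distance_during_latency(CONVEYOR_SPEED, 5)     # local inference

print(f"Part travels {cloud_gap:.2f} m during a cloud round trip")
print(f"Part travels {edge_gap:.2f} m during local inference")
```

At that assumed speed, a defective part moves about a meter before a cloud decision arrives, but only about a centimeter when the decision is made on the device.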

The Rise of Edge-Optimized Silicon

This revolution is fundamentally driven by hardware breakthroughs. In 2026, specialized system-on-chips (SoCs) equipped with highly efficient, low-power Neural Processing Units (NPUs) are embedded into everyday business hardware. These NPUs are designed specifically to run dense, quantized machine learning models locally, drawing fractions of the power required by traditional server GPUs while delivering tera-operations per second (TOPS) directly at the source.

2. Core Pillars of Scalable Edge AI Infrastructure

Building an enterprise infrastructure capable of handling the demands of 2026 requires a deep understanding of the four operational pillars of Edge AI.

I. Data Truncation and Bandwidth Optimization

Data is expensive to move. Cloud service providers charge for outbound data transfer and for large storage pools, and those costs grow with every raw stream you upload. Edge AI reduces them by filtering data locally. Instead of streaming continuous, high-definition video from a site’s security cameras to the cloud, the on-camera model processes the feed locally. It discards the millions of “empty” frames where nothing happens and transmits only small packets of high-value metadata when a specific safety or operational anomaly is detected. Because only a fraction of the data leaves the site, network bandwidth costs can fall by as much as 85%.
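The local filtering idea can be sketched in a few lines. This is a minimal illustration, not a production detector: it assumes frames arrive as flat lists of grayscale pixel values, it uses simple frame differencing in place of a trained model, and the names `Event` and `filter_frames` and both thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List

Frame = List[int]  # assumption: a flattened grayscale frame, pixel values 0-255


@dataclass
class Event:
    """Small metadata record sent upstream instead of the raw frame."""
    frame_index: int
    changed_fraction: float  # share of pixels that changed noticeably


def filter_frames(frames: Iterable[Frame],
                  pixel_delta: int = 25,
                  min_changed_fraction: float = 0.05) -> Iterator[Event]:
    """Yield metadata only for frames that differ enough from the previous frame."""
    previous = None
    for index, frame in enumerate(frames):
        if previous is not None and frame:
            changed = sum(1 for a, b in zip(frame, previous)
                          if abs(a - b) > pixel_delta)
            fraction = changed / len(frame)
            if fraction >= min_changed_fraction:
                yield Event(index, fraction)
        previous = frame  # "empty" frames are dropped here and never uploaded
```

Only the `Event` records would leave the device; the frames themselves stay local and are discarded.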

II. Absolute Data Sovereignty and Privacy Compliance

As stringent privacy regulations take effect worldwide, such as the EU AI Act, GDPR, and state-level data mandates, moving sensitive consumer data across regional boundaries introduces significant legal risk.

- The Solution: Edge computing eases this compliance burden by keeping the data local. A medical device or a retail payment terminal can analyze biometric features, process financial intent, and execute personalized outcomes without ever transmitting the user’s raw, unencrypted personal data to an external cloud database, which greatly reduces exposure to mass data breaches.
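One way to picture "raw data never leaves the device" is an allow-list applied before anything is transmitted. The sketch below is an assumption-laden illustration: the field names, the `ALLOWED_FIELDS` set, and `build_outbound_payload` are hypothetical, and a keyed hash of the device ID is just one possible pseudonymization choice. It is not a statement of what any regulation requires.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical allow-list: only derived, non-sensitive fields may leave the device.
ALLOWED_FIELDS = {"decision", "confidence", "timestamp"}


def build_outbound_payload(local_result: Dict[str, Any],
                           device_id: str,
                           secret: bytes) -> Dict[str, Any]:
    """Keep raw inputs on the device and return only allow-listed fields."""
    payload = {k: v for k, v in local_result.items() if k in ALLOWED_FIELDS}
    # Pseudonymize the device identifier with a keyed hash (HMAC-SHA256).
    payload["device"] = hmac.new(secret, device_id.encode("utf-8"),
                                 hashlib.sha256).hexdigest()
    return payload
```

Anything not on the allow-list, such as a biometric embedding or a card number, is simply never serialized for upload.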

III. Operational Resilience and Offline Autonomy

A business that relies entirely on a centralized cloud is highly vulnerable to network blackouts, fiber optic cuts, and cloud provider downtime. Edge AI insulates operations from these failures. Because the machine learning models and operational logic live directly on the local edge nodes, factory floors, supply chain systems, and automated storefronts can continue to operate with full analytical capabilities even if the primary internet connection drops completely, syncing metadata back to the cloud once connectivity is restored.
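The "keep working offline, sync later" behavior is commonly built as a store-and-forward buffer. The sketch below assumes a hypothetical `send` callable that returns `True` when the uplink accepted a record; the class name and buffer size are likewise illustrative.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForward:
    """Buffer metadata locally and drain it whenever the uplink is available."""

    def __init__(self, send: Callable[[Dict], bool], max_buffered: int = 10_000):
        self._send = send  # hypothetical uplink; returns True on success
        # Bounded buffer: when full, the oldest record is dropped first.
        self._buffer: Deque[Dict] = deque(maxlen=max_buffered)

    def record(self, item: Dict) -> None:
        """Store a record and opportunistically try to sync."""
        self._buffer.append(item)
        self.flush()

    def flush(self) -> int:
        """Send buffered records in order; stop at the first failure."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # still offline; keep the record for the next attempt
            self._buffer.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._buffer)
```

Local inference and actuation never wait on `flush`; only the reporting path is deferred while the connection is down.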

IV. Federated Learning and Decentralized Training

In 2026, AI models are no longer static; they continuously learn from their environment. Through Federated Learning, decentralized edge nodes each train a local copy of the model on their own data. The edge nodes then upload only their model weight updates (the mathematical learnings) to a centralized system. The central cloud aggregates these local updates to improve the master AI model and redistributes the improved model back down to the edge. This allows the system to keep improving as the fleet grows without ever centralizing raw corporate data assets.
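The aggregation step can be illustrated with a sample-weighted average in the style of federated averaging (FedAvg). This is a simplified sketch: it treats each model as a flat list of floats, it averages full weight vectors rather than deltas, and the function name is an assumption made for the example.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Combine per-node weights into one global vector.

    Each update is (weights, num_local_samples); nodes that trained on more
    samples contribute proportionally more to the average.
    """
    if not updates:
        raise ValueError("no updates to aggregate")
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("sample counts must sum to a positive number")
    dim = len(updates[0][0])
    aggregated = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            aggregated[i] += w * n / total_samples
    return aggregated
```

The server only ever sees the weight vectors and sample counts; the training data that produced them stays on each node.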

3. High-Performance Sectors Utilizing Edge AI in 2026

The convergence of Edge Computing and AI is altering operations across every major industrial and commercial domain this year.

| Industrial Domain | Primary Edge AI Application | 2026 Impact Metric | Core Technological Asset |
| :--- | :--- | :--- | :--- |
| **Smart Manufacturing** | Real-time predictive maintenance & defect spotting | 40% Reduction in Downtime | Sensor fusion with Edge NPUs |
| **E-Commerce & Retail** | Hyper-personalized local digital visual experiences | 25% Increase in Conversions | Edge-rendered AR/VR engines |
| **Logistics & Fleet** | Autonomous route planning & obstacle avoidance | 30% Efficiency Gain | Localized computer vision & LiDAR |
| **Critical Infrastructure** | Autonomous grid balancing & asset health sensing | 50% Speed-to-Alert Lift | Edge-native anomaly detection |

1. Smart Retail and Autonomous Commerce

As many readers of ngwmore.com manage multi-platform e-commerce stores and omni-channel setups, Edge AI represents the bridge to physical-digital integration. In 2026, physical interactive displays use local computer vision to estimate viewer demographics and engagement levels, then re-render the on-screen digital assets and item recommendations in real time to match the viewer’s profile.

2. High-Speed Industrial Automation

On the modern factory floor, precision is measured in micrometers. Edge AI nodes process continuous acoustic, vibration, and thermal streams directly from machinery. By running local predictive analytics at the device level, the system detects micro-frictional variances that indicate an impending tool fracture, autonomously slowing down the robotic assembly line within milliseconds to prevent structural damage.
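A very simple form of device-level anomaly detection is a rolling z-score over recent sensor readings. The sketch below is a stand-in for the predictive analytics described above, not a model of tool fracture: the class name, window size, and threshold are all assumptions to be tuned against real vibration data.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Flag readings that deviate sharply from the recent rolling window."""

    def __init__(self, window: int = 50, z_threshold: float = 4.0):
        self._window = window
        self._z_threshold = z_threshold
        self._history = deque(maxlen=window)

    def update(self, reading: float) -> bool:
        """Return True if the reading looks anomalous; learn from normal ones."""
        anomalous = False
        if len(self._history) == self._window:  # wait until the window is full
            mu = mean(self._history)
            sigma = pstdev(self._history)
            if sigma > 0 and abs(reading - mu) / sigma > self._z_threshold:
                anomalous = True
        if not anomalous:
            # Anomalies are kept out of the baseline so they do not mask later ones.
            self._history.append(reading)
        return anomalous
```

In a deployment, a `True` result would trigger the local slow-down command directly on the node, with no cloud round trip in the control path.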

4. Architectural Stack: Building Your Edge AI Infrastructure

To transition your enterprise network from a centralized configuration to an intelligent edge architecture in 2026, you must implement a robust, multi-layered technological stack.

Layer 1: Hardware and Silicon Acceleration

Deploy devices built with native AI accelerators such as NPUs or embedded GPUs. This includes enterprise edge gateways built on NVIDIA Jetson modules, Intel hardware supported by the OpenVINO toolkit, or high-performance edge servers built on ARM-based processors.

Layer 2: Model Quantization and Compacting

You cannot run a massive, 100-billion-parameter large language model on a localized edge gateway. In 2026, developers use model quantization and distillation techniques. Converting 32-bit floating-point weights into 8-bit integers shrinks weight storage by roughly 4x, usually at a small cost in accuracy, allowing specialized, vertical AI models to run within the constrained memory of edge devices.
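The 32-bit to 8-bit conversion can be sketched with symmetric per-tensor quantization, one of the simplest schemes. Real toolchains typically add calibration data, per-channel scales, and zero points; the function names here are illustrative only.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Rounding error per weight is bounded by half of one quantization step.
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now needs one byte instead of four, plus a single float for the scale, which is where the roughly 4x reduction in weight storage comes from.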

Layer 3: Edge Orchestration Software

Managing a distributed network of 5,000 intelligent edge devices requires centralized management software. Enterprise platforms like KubeEdge, AWS IoT Greengrass, or Azure IoT Edge allow network administrators to push algorithm updates, monitor device health, and allocate computing resources across a global network of edge nodes seamlessly from a single terminal.

5. Tactical Challenges: Navigating the 2026 Hurdles

While the operational returns of Edge AI are exceptional, infrastructure scaling introduces unique structural hurdles that require careful risk management:

- The Edge Security Vulnerability: Unlike a highly secure, physically locked-down cloud data center, edge nodes (like security cameras, outdoor gateways, or retail kiosks) live in the physical world. They are vulnerable to physical tampering, theft, and localized hardware hacking. Administrators must enforce robust Zero-Trust Network Access (ZTNA) architectures, ensuring that if a single edge node is compromised, it is instantly isolated from the broader corporate network.

- Hardware Fragmentation: Managing an infrastructure composed of different hardware generations, silicon vendors, and sensor protocols can lead to massive configuration debt. Organizations must prioritize open-source, vendor-agnostic software abstractions to ensure fluid cross-compatibility over long operational lifecycles.

- Model Drift Management: Because edge nodes operate in distinct environments, an AI model running in an industrial plant in Brazil may experience different environmental inputs than one operating in Germany. Continuous remote monitoring is mandatory to catch performance degradation or model drift over time.
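For the drift monitoring mentioned in the last item, one widely used statistic is the Population Stability Index (PSI), which compares a live distribution against a baseline. The sketch below is one possible approach, not the only one: the bin edges, the smoothing constant, and the function names are assumptions, and the common reading of PSI above roughly 0.25 as significant drift is a rule of thumb rather than a standard.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin; values outside the edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            # Bins are half-open, except the last one, which includes its upper edge.
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[i + 1]):
                counts[i] += 1
                break
    total = sum(counts) or 1
    return [c / total for c in counts]


def population_stability_index(baseline: Sequence[float],
                               live: Sequence[float],
                               edges: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (live - base) * ln(live / base), with smoothing."""
    p = histogram(baseline, edges)
    q = histogram(live, edges)
    return sum((qi - pi) * math.log((qi + eps) / (pi + eps))
               for pi, qi in zip(p, q))
```

Each site could compute this locally on model confidence scores or a key input feature and report only the resulting number, so the plant in Brazil and the plant in Germany can be compared without shipping raw data.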

6. Strategic Implementation Roadmap

Ready to transition your business infrastructure to the intelligent edge? Follow this 3-step deployment plan:

Step 1: Execute a Data & Latency Audit

Map your entire business operational workflow. Identify the areas where latency damages conversions, where bandwidth costs are draining your capital, or where network dependency introduces systemic vulnerability. These high-friction zones are your primary targets for Edge AI integration.

Step 2: Implement an Edge-Native Pilot Campaign

Select a high-impact, bounded project—such as deploying localized object recognition cameras on a single logistics loading dock or implementing predictive maintenance analytics on a primary server cluster. Quantize your models, deploy them onto verified edge gateways, and benchmark your local processing speed and bandwidth savings directly against your legacy cloud architecture.
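The benchmark in this step can be as simple as comparing latency percentiles and bytes transferred for the pilot against the legacy path. The helper names below are hypothetical, and the inputs are whatever measurements you collect during the pilot.

```python
from statistics import median, quantiles
from typing import Dict, Sequence


def summarize_latency(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Median and 95th-percentile latency from measured samples (needs >= 2)."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(samples_ms, n=20, method="inclusive")[-1]
    return {"p50": median(samples_ms), "p95": p95}


def bandwidth_savings(cloud_bytes: float, edge_bytes: float) -> float:
    """Fraction of upstream traffic eliminated by the edge deployment."""
    if cloud_bytes <= 0:
        raise ValueError("cloud_bytes must be positive")
    return 1 - edge_bytes / cloud_bytes
```

Reporting the 95th percentile alongside the median matters because occasional slow responses, not the typical case, are usually what breaks a real-time workflow.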

Step 3: Establish Centralized Governance for Scale

Once your pilot project demonstrates clear ROI, establish your centralized edge orchestration layer. Automate your deployment playbooks so that adding your next 100 edge nodes is as simple as connecting them to power, allowing the central network manager to securely authenticate, update, and govern the new assets automatically.

Conclusion: The Distributed Future of Business

The centralized cloud paradigm was a vital stepping stone, but the business future belongs to the distributed, intelligent edge. Edge Computing and AI have combined to create an enterprise model that is faster, more cost-efficient, structurally resilient, and fundamentally compliant with the global data privacy landscape of 2026.

For the ngwmore.com community, the core takeaway is clear: True scale requires decentralizing your intelligence. By pushing your data processing, analytical insights, and automated actions directly to the physical perimeter of your operations, you liberate your enterprise from network limits, protect your core digital assets, and position your brand to scale at machine speed.

The data is everywhere; the processing must be too. Is your infrastructure positioned at the edge?