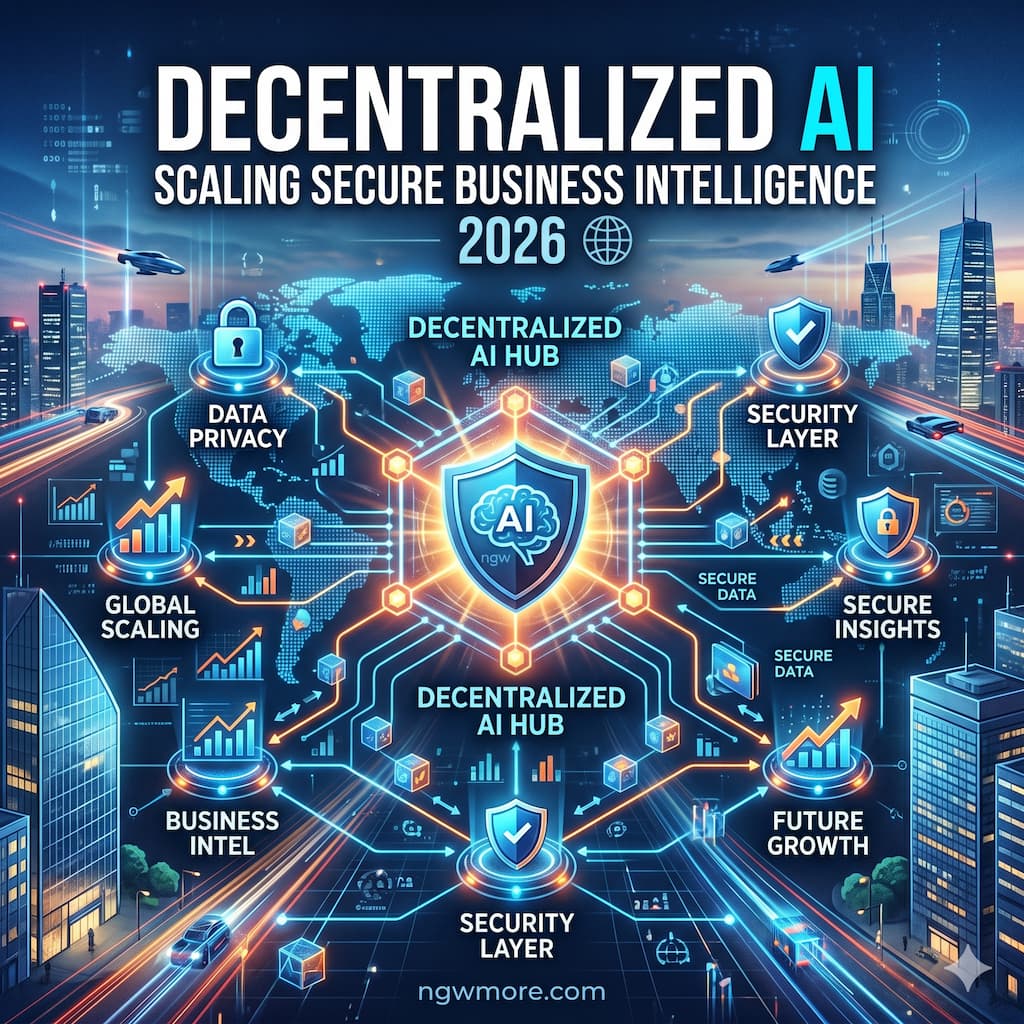

Decentralized AI: Scaling Secure Business Intelligence 2026

The centralized data architecture that underpinned the first wave of enterprise artificial intelligence has run into a hard wall. As we navigate through May 2026, corporate reliance on a few hyperscale cloud monopolies to process sensitive business data has evolved from a competitive convenience into an existential operational risk. Sending proprietary financial metrics, customer interaction histories, and supply chain telemetry to external, centralized server farms creates latency bottlenecks, drives up data egress costs, and widens an organization's exposure to data breaches.

Concurrently, the global regulatory environment has tightened. With the strict enforcement of the EU AI Act, India’s DPDP Act, and updated GDPR frameworks, data sovereignty is no longer an optional compliance checkbox; it is a baseline corporate mandate. Enterprises can no longer afford to treat AI as a “black box” where data goes in and unverifiable outputs come out.

For the digital entrepreneurs, web administrators, and technology strategists at ngwmore.com, the solution driving the next generation of enterprise analytics is the convergence of Decentralized AI (DeAI) and Business Intelligence (BI).

By distributing machine learning models across decentralized nodes, using cryptographic execution layers, and processing data natively at the edge, modern organizations are scaling their business intelligence capabilities rapidly. This structural pivot allows brands to extract high-value predictive insights while keeping raw data private and retaining full control of their infrastructure.

1. The 2026 Shift: From Centralized Monopolies to Distributed Networks

To properly scale a secure business intelligence framework this year, you must understand the structural transition occurring across the enterprise software landscape. We have officially exited the experimental phase of AI integration and entered the era of Industrialized Governance.

CENTRALIZED STORAGE (Past)               DECENTRALIZED DATA MESH (2026)
┌─────────────────────────────────┐      ┌─────────────────────────────────┐
│ Raw Proprietary Datasets        │      │ Domain Data Product (Sales)     │◄───┐
│                │                │      ├─────────────────────────────────┤    │
│                ▼                │      │ Domain Data Product (Finance)   │◄───┼── [Cryptographic]
│ Transmitted Across Network      │      ├─────────────────────────────────┤    │   [Orchestration]
│                ▼                │      │ Domain Data Product (Logistics) │◄───┘
│ Central Cloud Mono-Repository   │      └─────────────────────────────────┘
└─────────────────────────────────┘      *Data never leaves its local environment

The Breakdown of the Data Warehouse Bottleneck

For years, the standard BI playbook dictated that all corporate data be extracted, transformed, and loaded (ETL) into a single, massive cloud data warehouse before any analysis could occur. This centralized model has become a major bottleneck. Corporate data teams are routinely overwhelmed by ad hoc dashboard requests, and the financial drag of capacity-based compute scales linearly with data growth.

In 2026, forward-thinking enterprises are transitioning to a Data Mesh architecture powered by Decentralized AI. Instead of moving data to the model, the model moves to the data. AI analytical agents operate directly within distinct, localized domain data environments—such as a localized e-commerce database, an on-premise inventory ledger, or an isolated financial tracking application—processing insights natively without ever exposing the raw underlying datasets to an external network.
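The minimal Python sketch below makes "the model moves to the data" concrete. It assumes each domain keeps its rows in a local SQLite database and that only aggregate results may leave the domain. The `DomainNode` class, its `run_locally` method, and the `orders` table are hypothetical names invented for illustration. They are not part of any data mesh product.

```python
import sqlite3
from typing import Callable


class DomainNode:
    """Hypothetical domain data product that keeps its raw rows local."""

    def __init__(self, name: str, rows: list[tuple[str, float]]):
        self.name = name
        # An in-memory SQLite database stands in for the domain's local store.
        self._db = sqlite3.connect(":memory:")
        self._db.execute("CREATE TABLE orders (region TEXT, amount REAL)")
        self._db.executemany("INSERT INTO orders VALUES (?, ?)", rows)

    def run_locally(self, analysis: Callable[[sqlite3.Connection], dict]) -> dict:
        # The analysis travels to the data; only its return value leaves the node.
        result = analysis(self._db)
        if not isinstance(result, dict):
            raise TypeError("analysis must return an aggregate dict")
        return result


def revenue_by_region(db: sqlite3.Connection) -> dict:
    # Returns per-region totals, never individual order rows.
    rows = db.execute("SELECT region, SUM(amount) FROM orders GROUP BY region")
    return {region: total for region, total in rows.fetchall()}


nodes = [
    DomainNode("eu-shop", [("EU", 120.0), ("EU", 80.0)]),
    DomainNode("us-shop", [("US", 50.0), ("EU", 10.0)]),
]

# The coordinator merges aggregates; it never sees a raw order.
combined: dict[str, float] = {}
for node in nodes:
    for region, total in node.run_locally(revenue_by_region).items():
        combined[region] = combined.get(region, 0.0) + total

print(combined)  # {'EU': 210.0, 'US': 50.0}
```

The key design choice is that the analysis function is shipped to each node, and only its aggregate return value crosses the domain boundary. A real deployment would also need to restrict what that function may return, for example by enforcing minimum group sizes.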

2. Core Pillars of Decentralized Business Intelligence

Scaling a secure, decentralized BI framework in 2026 requires implementing four primary technological pillars into your corporate data stack.

I. Federated Learning for Cross-Organizational Synthesis

Historically, training an AI model to recognize macro industry trends required pooling raw data into a shared database. For highly competitive or regulated sectors—such as e-commerce, banking, and medical diagnostics—this was legally and strategically impossible.

In 2026, Federated Learning has solved this gridlock. Decentralized nodes download a master analytical model from a secure registry and train it locally on their private data assets. The nodes then upload only their model weight updates (the mathematical learnings) to a central coordinator. The coordinator aggregates these updates, typically as an average weighted by each node's sample count, into an improved master model and redistributes it to the network. This allows multiple distinct business branches or non-competing industry partners to collaborate on macro predictive insights without ever exchanging private customer logs or transaction trails.
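The aggregation step is commonly implemented as federated averaging (FedAvg), where each node's model is weighted by the number of local samples it trained on. The sketch below rests on several assumptions:

- a toy linear model y = w0 + w1 * x
- noise-free synthetic data split unevenly across three nodes
- plain gradient descent on each node

The functions `local_train`, `federated_average`, and `make_node` are illustrative and do not belong to any specific framework's API.

```python
def local_train(weights, data, lr=0.1, epochs=5):
    """Private training on one node: full-batch gradient descent on
    mean squared error for the model y = w0 + w1 * x."""
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        g0 = sum((w0 + w1 * x) - y for x, y in data) / n
        g1 = sum(((w0 + w1 * x) - y) * x for x, y in data) / n
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return [w0, w1]


def federated_average(updates):
    """FedAvg: average the models, weighting each by its sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def make_node(xs):
    # Synthetic private data that never leaves the node: y = 2 + 3x.
    return [(x, 2.0 + 3.0 * x) for x in xs]


nodes = [
    make_node([0.0, 0.5, 1.0]),
    make_node([1.0, 1.5, 2.0, 2.5]),
    make_node([0.2, 0.8]),
]

global_w = [0.0, 0.0]
for _ in range(300):  # communication rounds
    # Each node trains locally and reports only (weights, sample_count).
    updates = [(local_train(global_w, data), len(data)) for data in nodes]
    global_w = federated_average(updates)

print([round(w, 2) for w in global_w])  # [2.0, 3.0]
```

Only `(weights, sample_count)` pairs ever reach the coordinator. Production frameworks such as Flower or TensorFlow Federated build secure aggregation, client sampling, and fault handling on top of this basic loop.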

II. Zero-Knowledge Proofs (ZKPs) and Trusted Execution Environments

How do you verify that an independent, decentralized compute node actually ran an analytical query accurately without tampering with the results?

Modern DeAI infrastructures combine Trusted Execution Environments (TEEs), which are hardware-isolated enclaves built into modern edge silicon, with Zero-Knowledge Proofs (ZKPs).

- The Mechanism: An AI agent executes a complex database analysis inside a hardware-isolated TEE.

- The Output: The system returns the analytical answer alongside a mathematically verifiable ZK-proof. This proof certifies to corporate compliance teams that the query was executed correctly against the committed dataset, without revealing any underlying sensitive rows or private database schemas to the network operator.
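Real query-verification systems compile the analysis into a ZK circuit (for example, a zk-SNARK), which is well beyond the scope of a short example. The textbook Schnorr protocol, made non-interactive with the Fiat-Shamir heuristic, shows the basic prove/verify pattern. The prover convinces a verifier that it knows a secret `x` behind a public value `y`, without revealing `x`.

This sketch makes two simplifying assumptions:

- It uses deliberately tiny, insecure toy parameters (`P = 1019`, `Q = 509`, `G = 4`).
- It binds a hypothetical `statement` string, such as a query result, into the challenge hash.

```python
import hashlib
import secrets

# Toy parameters ONLY: P = 2Q + 1 is a safe prime and G = 4 generates the
# subgroup of prime order Q. Far too small to be secure.
P, Q, G = 1019, 509, 4


def challenge(*values: object) -> int:
    # Fiat-Shamir: derive the verifier's challenge by hashing the transcript.
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret_x: int, statement: str) -> tuple[int, int, int]:
    """Prove knowledge of x with y = G^x mod P, bound to `statement`."""
    y = pow(G, secret_x, P)           # public value
    r = secrets.randbelow(Q)          # fresh random nonce
    t = pow(G, r, P)                  # commitment
    c = challenge(G, y, t, statement)
    s = (r + c * secret_x) % Q        # response, masked by the random nonce r
    return y, t, s


def verify(y: int, t: int, s: int, statement: str) -> bool:
    c = challenge(G, y, t, statement)
    # Holds because G^s = G^(r + c*x) = t * y^c (mod P).
    return pow(G, s, P) == (t * pow(y, c, P)) % P


y, t, s = prove(123, statement="query_result=42")
print(verify(y, t, s, "query_result=42"))            # True
print(verify(y, t, (s + 1) % Q, "query_result=42"))  # False: tampered response
```

The verifier only ever sees `y`, `t`, and `s`, never `x`. A toy group this small can be brute-forced, so real deployments use elliptic-curve groups of roughly 256-bit order.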

III. Natural Language to SQL/DAX Agentic Analytics

The primary user interface for business intelligence in 2026 has completely shifted away from complex, static dashboards packed with confusing filters. Modern BI relies on Agentic Conversational Interfaces.

Instead of waiting for an analyst to build a custom report, non-technical business managers simply interact with an AI analytics agent using natural language. Connected to curated, decentralized semantic models, these autonomous agents interpret user intent, map natural language questions to clean SQL or DAX code, execute the queries across local data buckets, and deliver traceable summaries in seconds.
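The sketch below illustrates how a semantic model constrains an agent's output. A simple keyword matcher stands in for the language model, an assumption made to keep the example self-contained. It can only assemble SQL from vetted fragments in the hypothetical `MEASURES` and `DIMENSIONS` dictionaries, so free-form user text never reaches the database. The `sales` table and its columns are also invented for illustration.

```python
import sqlite3

# Hypothetical curated semantic model: business terms -> vetted SQL fragments.
MEASURES = {"revenue": "SUM(amount)", "orders": "COUNT(*)"}
DIMENSIONS = {"region": "region", "month": "strftime('%Y-%m', order_date)"}


def to_sql(question: str) -> str:
    """Keyword matcher standing in for an LLM; emits only vetted fragments."""
    words = question.lower().replace("?", "").split()
    measure = next((m for m in MEASURES if m in words), None)
    dimension = next((d for d in DIMENSIONS if d in words), None)
    if measure is None:
        raise ValueError("question does not mention a known measure")
    select = f"{MEASURES[measure]} AS {measure}"
    if dimension is None:
        return f"SELECT {select} FROM sales"
    return (f"SELECT {DIMENSIONS[dimension]} AS {dimension}, {select} "
            f"FROM sales GROUP BY 1 ORDER BY 1")


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE sales (region TEXT, amount REAL, order_date TEXT)")
db.executemany("INSERT INTO sales VALUES (?, ?, ?)", [
    ("EU", 100.0, "2026-04-03"),
    ("US", 40.0, "2026-04-18"),
    ("EU", 60.0, "2026-05-02"),
])

sql = to_sql("What was revenue by region?")
print(sql)
print(db.execute(sql).fetchall())  # [('EU', 160.0), ('US', 40.0)]
```

Because the generated SQL is built only from the semantic model's fragments, each answer can be traced back to a named measure and dimension.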

IV. True Explainability and Traceable Lineage

As highlighted by recent 2026 technology tracking surveys, over 60% of enterprise AI implementations have historically struggled due to a lack of institutional trust. Executives refuse to make multi-million-dollar pricing or inventory decisions based on unverifiable “black box” model recommendations.

Modern decentralized BI platforms enforce Decision-Path Clarity. Every automated insight, forecast, or anomaly alert delivered by the AI agent comes baked with an immutable audit log detailing:


- The exact measures, tables, and behavioral relationships used to formulate the conclusion.

- Full lineage tracing back to the cryptographically verified source data blocks.

- Natural-language explanations of the underlying mathematical variables used to filter the metrics.
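One way to make such an audit log tamper-evident is to hash both the source data and the insight record itself. This minimal sketch assumes source data arrives as lists of JSON-serializable rows. `InsightRecord`, `block_digest`, and the field names are hypothetical, chosen to mirror the three audit items listed above.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def block_digest(rows: list[dict]) -> str:
    # Canonical JSON so identical rows always produce the same SHA-256 digest.
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


@dataclass(frozen=True)
class InsightRecord:
    """Hypothetical audit record attached to one AI-generated insight."""
    insight: str
    measures: list[str]          # exact measures used
    tables: list[str]            # tables and relationships touched
    source_digests: list[str]    # lineage: hashes of the source data blocks
    explanation: str             # plain-language reasoning

    def record_id(self) -> str:
        # Hashing the whole record means any later edit changes its ID.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()


source_rows = [{"region": "EU", "amount": 160.0}, {"region": "US", "amount": 40.0}]
record = InsightRecord(
    insight="EU revenue is 4x US revenue this period",
    measures=["SUM(amount)"],
    tables=["sales"],
    source_digests=[block_digest(source_rows)],
    explanation="Revenue is the sum of order amounts, grouped by region.",
)

# An auditor re-hashes the source rows to confirm the lineage still holds.
print(record.source_digests[0] == block_digest(source_rows))  # True
print(record.record_id()[:16])
```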

3. The 2026 Secure BI Stack: Leading Enterprise Platforms

To implement a secure, distributed intelligence infrastructure, you must shift away from capacity-based legacy monopolies and adopt specialized tools, favoring open-weight models where possible.

| Platform | Architectural Class | Best For | Standout 2026 Core Feature |
|---|---|---|---|
| Google BigQuery ML | Ecosystem Native | SQL-driven machine learning | Direct Model Embedding: Lets data analysts create, train, and run predictive models with standard SQL queries inside BigQuery. |
| Civo / Sovereign Cloud Suites | Distributed Compute | Data sovereignty & GDPR compliance | Sovereign Infrastructure Isolation: Provides physically isolated infrastructure for model execution. |
| Bold BI / Microsoft Copilot Studio | Embedded Analytics | Conversational BI & dashboard integration | Semantic Model Lock: Forces conversational AI agents to respect role-based access tokens and organizational definitions. |
| Numerai Ensembles | Crowdsourced Intelligence | Financial forecasting & risk modeling | Encrypted Signal Processing: Distributes obfuscated financial data to a global data science network, which returns predictions without learning what the underlying features represent. |

4. Operationalizing Decentralized AI: A 3-Step Tactical Roadmap

How do you transition your brand from an insecure, centralized data loop into a highly resilient, decentralized business intelligence system this year? Follow this systematic deployment plan:

Step 1: Establish Clean Data Product Segmentation

Before deploying automated agents, your corporate data must be treated as a collection of structured, standardized Data Products. Break down the internal data walls between your marketing platforms, billing systems (like Stripe or a TikTok Shop affiliate matrix), and website backend databases.

Organize these disparate inputs into clean, domain-specific repositories with clearly defined semantic models, role-based access permissions, and rigorous data quality scoring standards.
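A data product's access rules and quality score can live alongside its data. This minimal sketch makes two assumptions:

- Quality is measured as completeness, meaning the share of required fields that are filled.
- Permissions are a simple role allow-list.

`DataProduct` and the billing fields are hypothetical. Real platforms typically track further quality dimensions such as freshness and validity.

```python
from dataclasses import dataclass


@dataclass
class DataProduct:
    """Hypothetical domain data product with access rules and a quality score."""
    domain: str
    required_fields: tuple[str, ...]
    allowed_roles: frozenset[str]
    rows: list[dict]

    def quality_score(self) -> float:
        # Completeness: share of required fields that are present and non-empty.
        if not self.rows:
            return 0.0
        filled = sum(
            1
            for row in self.rows
            for f in self.required_fields
            if row.get(f) not in (None, "")
        )
        return filled / (len(self.rows) * len(self.required_fields))

    def read(self, role: str) -> list[dict]:
        # Role-based access check before any rows are returned.
        if role not in self.allowed_roles:
            raise PermissionError(f"role {role!r} may not read {self.domain}")
        return list(self.rows)


billing = DataProduct(
    domain="billing",
    required_fields=("invoice_id", "amount", "currency"),
    allowed_roles=frozenset({"finance_analyst"}),
    rows=[
        {"invoice_id": "A1", "amount": 99.0, "currency": "EUR"},
        {"invoice_id": "A2", "amount": 45.0, "currency": ""},
    ],
)
print(round(billing.quality_score(), 3))  # 0.833
```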

Step 2: Implement a Quantized Local Inference Model

Do not attempt to push massive, multi-billion-parameter general-purpose large language models through your local business gateways. Use model quantization techniques to compress specialized, vertical analytical models into efficient, low-precision formats (such as 8-bit or 4-bit integer weights).

Deploy these lightweight models onto secure edge gateways or on-premise servers equipped with modern Neural Processing Units (NPUs), ensuring lightning-fast local inference without any external network round-trips.
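To see what 8-bit quantization does to weights, here is a sketch of symmetric per-tensor int8 quantization, one of the simplest schemes. Each weight is stored as an integer in [-127, 127], and all weights share one floating-point scale. The function names and sample weights are illustrative. Production toolchains add per-channel scales, calibration data, and 4-bit formats, none of which this sketch covers.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximately scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest step and clamp to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.9, -0.5]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(q)                     # [42, -127, 0, 90, -50]
print(max_err <= scale / 2)  # True: error is at most half a step
```

Storing int8 codes instead of 32-bit floats cuts weight memory roughly fourfold. The rounding error per weight is bounded by half a quantization step (`scale / 2`).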

[Raw Inbound Data Packet]
                    │
                    ▼
┌───────────────────────────────────────┐
│ Localized NPU Edge Server Enclave     │ ◄─── Runs Quantized Local Inference
├───────────────────────────────────────┤
│ * Executes Semantic Translation       │
│ * Filters Anomaly Signatures          │
│ * Purges Personally Identifiable Data │
└───────────────────┬───────────────────┘
                    │
                    ▼
[Clean Metadata / Learning Weights Sent to Master Core]
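The purge step in the diagram can be sketched as follows, assuming inbound packets are dicts with hypothetical `intent` and `note` fields. The two regular expressions catch only simple email and phone formats. They are illustrative, not a complete PII detector. Only counts and scrubbed text leave the edge node.

```python
import re
from collections import Counter

# Illustrative patterns only; real PII detection needs much broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def purge_pii(text: str) -> str:
    # Replace matches with placeholders before anything leaves the edge node.
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def summarize(packets: list[dict]) -> dict:
    """Edge-side step: emit only counts and scrubbed text for upload."""
    return {
        "packet_count": len(packets),
        "intent_counts": dict(Counter(p["intent"] for p in packets)),
        "scrubbed_notes": [purge_pii(p["note"]) for p in packets],
    }


packets = [
    {"intent": "refund", "note": "Call me at +1 (555) 010-2233 please"},
    {"intent": "refund", "note": "Email jane.doe@example.com about order 77"},
    {"intent": "shipping", "note": "Where is my parcel?"},
]
print(summarize(packets))
```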

Step 3: Configure Your Cryptographic Auditing Layer

Connect your domain data repositories using secure cryptographic orchestration software. When an autonomous AI agent executes an enterprise report or automates a logistical transaction, ensure the transaction parameters are stamped with a verifiable cryptographic signature. This creates a permanent, tamper-evident, and easily auditable execution ledger that satisfies both internal security directors and external compliance officers.
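Here is a minimal sketch of such a ledger, using only Python's standard library. Each entry carries an HMAC-SHA256 tag computed over the previous entry's tag plus the current action, so editing any past entry breaks every later check. The key and action fields are hypothetical. HMAC is symmetric, meaning anyone holding the key can also forge entries. For verification by external auditors, an asymmetric signature scheme such as Ed25519 would be the more appropriate choice.

```python
import hashlib
import hmac
import json

# Hypothetical key; in practice it would live in a KMS or HSM, not in code.
SIGNING_KEY = b"demo-only-signing-key"


def _tag(prev: str, action: dict) -> str:
    body = json.dumps({"prev": prev, "action": action}, sort_keys=True)
    return hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()


def append_entry(ledger: list[dict], action: dict) -> dict:
    # Chain each entry to the previous entry's tag.
    prev = ledger[-1]["mac"] if ledger else "genesis"
    entry = {"prev": prev, "action": action, "mac": _tag(prev, action)}
    ledger.append(entry)
    return entry


def verify_ledger(ledger: list[dict]) -> bool:
    prev = "genesis"
    for entry in ledger:
        if entry["prev"] != prev:
            return False
        # Constant-time comparison of the recomputed and stored tags.
        if not hmac.compare_digest(_tag(prev, entry["action"]), entry["mac"]):
            return False
        prev = entry["mac"]
    return True


ledger: list[dict] = []
append_entry(ledger, {"agent": "report-bot", "op": "weekly_sales_report"})
append_entry(ledger, {"agent": "logistics-bot", "op": "reorder", "sku": "A-17", "qty": 40})
print(verify_ledger(ledger))  # True

ledger[1]["action"]["qty"] = 400  # tamper with a past entry
print(verify_ledger(ledger))      # False
```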

5. Critical Risks: Navigating the 2026 Vulnerabilities

Scaling a decentralized intelligence framework introduces distinct security and model-training risks that require rigorous oversight:

- Model Poisoning and Malicious Injection: Because decentralized AI models ingest training updates from multiple distributed nodes, they are vulnerable to data poisoning attacks. If a single compromised node injects corrupted or falsified operational data into the training loop, it can warp the master model’s predictive capabilities. Organizations must implement robust aggregation algorithms (such as median-based or trimmed-mean aggregation) and automated anomaly detection to isolate and neutralize errant node updates before they reach the master model.

- Model IP Theft from Third-Party Hosts: If you are running highly valuable, proprietary machine learning algorithms across decentralized compute markets to lower hosting overhead, your model weights face extraction risks. Ensure your models are encrypted in transit and executed strictly within verified Trusted Execution Environments (TEEs) to prevent third-party hardware operators from scraping your intellectual property.

- The Fragility of Open-Source Model Drift: Relying heavily on open-source, open-weight foundational models requires continuous testing. Because these models lack centralized corporate maintenance, variations in real-world business jargon or macro environmental trends can cause model drift over time. Implement continuous evaluation loops to benchmark local model output accuracy against human-vetted control datasets weekly.
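For the model-poisoning risk, one widely used family of defenses is robust aggregation. The sketch below implements one such rule, not the only option. It drops updates whose distance from the coordinate-wise median is an outlier, measured in median-absolute-deviation units. It then takes the coordinate-wise median of the remaining updates. The `robust_aggregate` name, the `cutoff` default of 3.0, and the sample updates are assumptions for illustration.

```python
import statistics


def robust_aggregate(updates: list[list[float]], cutoff: float = 3.0) -> list[float]:
    """Reject outlier updates, then take the coordinate-wise median."""
    dim = len(updates[0])
    center = [statistics.median(u[i] for u in updates) for i in range(dim)]
    dists = [sum((u[i] - center[i]) ** 2 for i in range(dim)) ** 0.5 for u in updates]
    # Median absolute deviation (MAD) of the distances resists a few bad nodes.
    med_d = statistics.median(dists)
    mad = statistics.median(abs(d - med_d) for d in dists) or 1e-12
    kept = [u for u, d in zip(updates, dists) if (d - med_d) / mad <= cutoff]
    return [statistics.median(u[i] for u in kept) for i in range(dim)]


honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.21], [0.11, -0.19]]
poisoned = honest + [[5.0, 5.0]]  # one compromised node

naive = [sum(u[i] for u in poisoned) / len(poisoned) for i in range(2)]
print(naive)                       # roughly [1.084, 0.844]
print(robust_aggregate(poisoned))  # roughly [0.105, -0.195]
```

A single compromised node can drag a plain mean arbitrarily far. The median-based result stays close to the honest updates.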

6. The Infrastructure Synergy: Building the Sovereign Digital Enterprise

For the technology innovators, platform builders, and forward-thinking creators tracking trends on this blog, mastering decentralized AI is the ultimate shortcut to operational autonomy.

When your business intelligence is untethered from centralized cloud providers, your corporate architecture gains genuine redundancy, mirroring the structural principles that govern high-performance edge hosting networks. Your data remains within your sphere of control, protected from arbitrary third-party privacy-policy changes, sudden cloud subscription price hikes, and single-provider server outages.

By taking your surplus online profits and using them to construct an isolated, self-hosted, and cryptographically verified AI data infrastructure, you build a hard-to-copy competitive moat. You marry high-velocity web agility with the immutable, highly private, and deeply analytical wealth preservation mechanics of the global technical elite.


Conclusion: The Era of Governed Autonomy

The centralized AI paradigm was a necessary experimental bridge, but the future of sustainable enterprise analytics belongs exclusively to Decentralized AI. The ability to self-serve deep business intelligence via natural language, run complex predictive simulations directly on localized data products, and secure structural privacy using cryptographic enclaves has permanently redefined the relationship between code and corporate data.

For the ngwmore.com community, the path forward is clear: transition your organization away from centralized data traps and construct an integrated, sovereign intelligence framework. By grouping your data assets into clean domain products, deploying quantized analytics models to localized NPU nodes, and mandating absolute traceability across your operational decision-making loops, you strip away infrastructure risk and unlock exponential scale.

The records of the global economy are distributed; the intelligence must be too. Is your enterprise running on sovereign software?